Large Language Models (LLMs) have arrived with a bang. They are taking many areas of work by storm, and writers in particular feel the heat.

The Good

Let’s start with the positive: Generative AI has the potential to help writers massively. It can make a writer see different perspectives. It can support them with handling mundane tasks in the writing process. It can even ignite a creative spark in writers. In fact, there are many areas where AI can be like Robin to the writer's Batman: A sidekick helping the main act to shine ever more brightly with their work.

The Bad

And then there is the big BUT: AI can, of course, also write entire texts from start to finish. And these texts can be given to clients or employers without telling them that an AI engine wrote the piece.

I’m not talking about AI-assisted work here. That's been around for a long time, and tools like Grammarly are commonplace and often very helpful. Instead, I’m talking about entirely AI-generated content.

Since ChatGPT arrived, this has reached a truly new level: The initial impression of an AI-generated article is often good enough. Don't get me wrong, clients and employers will eventually notice: AI still writes in a bland, generic way and doesn't fact-check its claims, making for a less than stellar reading experience.

However, it's not easy to notice at first glance. Detecting AI-generated articles requires time and effort from clients and employers. And it instills mistrust.

Even though very few professional writers would ever hand in a piece written by AI without saying so, the fear on the demand side is there: "If it's so easy to let AI write an article, and if it's not easy for me to check whether it was AI-generated, how can I ever be sure it's not?"

This is becoming a real problem real fast.

"With freelancers in panic of losing their jobs and clients frustrated with AI-written work, ChatGPT has thrust the freelance world into disarray."

- How AI Is Upending The Freelance World, Forbes

The failure of AI checkers

Of course, there are plenty of tools out there that promise they'll detect whether a text was written by a human or not. They often claim a success rate of up to 98%. Many of them were around long before the ChatGPT release.

And that's exactly the problem: ChatGPT changed the rules of the game. The very developers of ChatGPT, a company called OpenAI, can only detect AI-generated texts in 26% of cases. That's roughly one in four. And that's by the very people who created the AI.

"Our classifier is not fully reliable."

OpenAI, creator of ChatGPT, on their detection

In other words, AI detection is close to useless when it comes to the new generation of text generators: Language models have become so good that it’s technically very hard, if not impossible, to tell whether an article has been written by AI or not.

That is, nigh impossible when using a general AI detection model that works for everybody.

But what if everybody had their own custom AI detection model, only trained on the content they’ve created in the past?

General vs. custom models

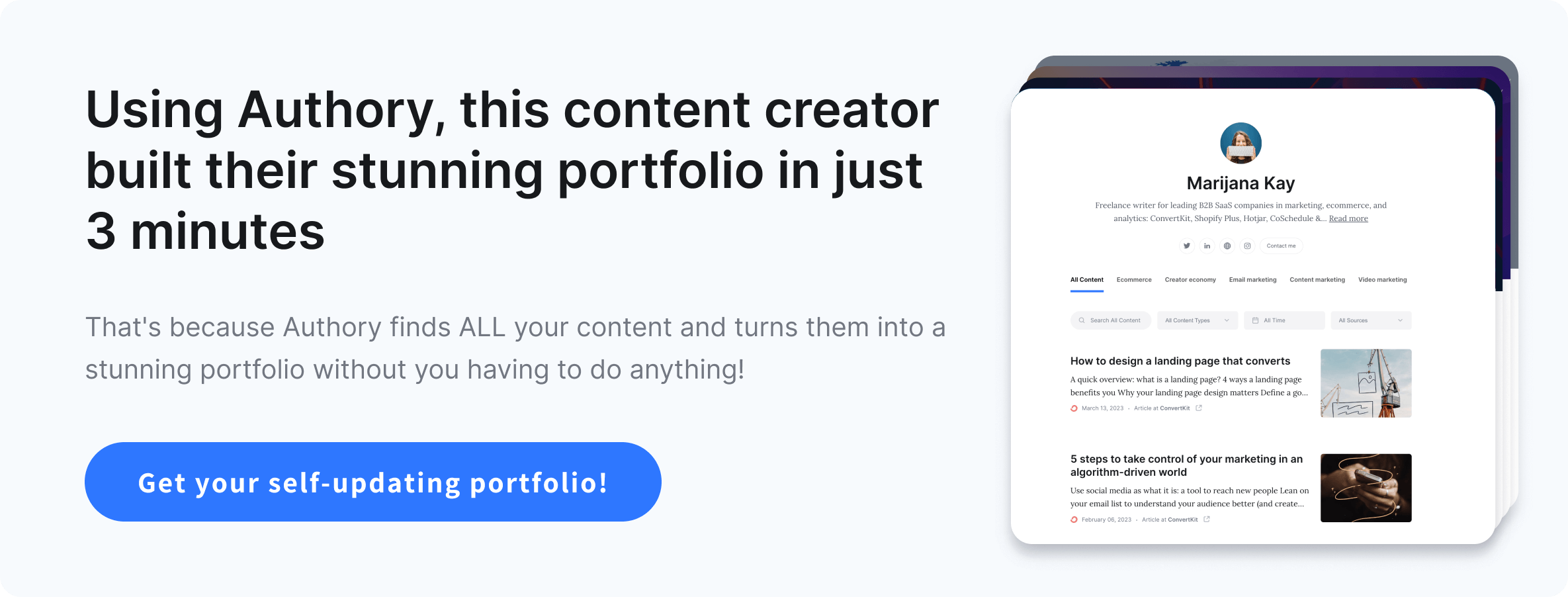

Authory is a platform that finds and stores all the content our customers create, no matter where they’ve published it, and no matter when. We essentially keep a copy of the entire body of work for our customers. Today, Authory stores millions of articles, and the median number of articles per customer is around 200.

With this detailed data, we can create a custom AI detection model for each and every one of our customers. And this helps our customers prove the authenticity of their work.

Here is how it works: If a customer writes a new piece, and they want independent proof that it hasn't been written by AI, we don't have to look at that piece in isolation. We can compare it to 200 other pieces by the same customer.

We use this trove of data to compute a unique “style fingerprint” for each and every one of our customers. This fingerprint takes into account thousands of different parameters. As a matter of fact, we create dozens or even hundreds of small fingerprints per customer, which are then combined into one big one.
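To make the idea of a fingerprint more tangible, here is a deliberately tiny sketch in Python. It is not Authory's actual model: the four features, the averaging step, and the function names `small_fingerprint` and `combined_fingerprint` are all assumptions chosen for illustration. A real system would look at far more parameters.

```python
import re
from statistics import mean


def small_fingerprint(text: str) -> dict[str, float]:
    """Compute a handful of toy style features for a single article.

    These features are illustrative assumptions, not the real parameter set.
    """
    sentences = [s for s in re.split(r"[.!?]+", text) if s.strip()]
    words = re.findall(r"[A-Za-z']+", text.lower())
    if not sentences or not words:
        raise ValueError("text must contain at least one word")
    return {
        # Average number of words per sentence
        "avg_sentence_len": len(words) / len(sentences),
        # Average number of characters per word
        "avg_word_len": mean(len(w) for w in words),
        # Vocabulary richness: distinct words divided by total words
        "type_token_ratio": len(set(words)) / len(words),
        # How often the writer reaches for a comma, per word
        "comma_rate": text.count(",") / len(words),
    }


def combined_fingerprint(articles: list[str]) -> dict[str, float]:
    """Merge the per-article fingerprints into one by averaging each feature."""
    smalls = [small_fingerprint(article) for article in articles]
    return {name: mean(fp[name] for fp in smalls) for name in smalls[0]}
```

Calling `combined_fingerprint` on a writer's archive returns one dictionary of averaged features. Averaging is the simplest possible way to merge small fingerprints; it is used here only because it keeps the sketch short.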

Of course, doing AI checks for customers on a "per piece" basis is not feasible. They want general proof that their texts are authentic.

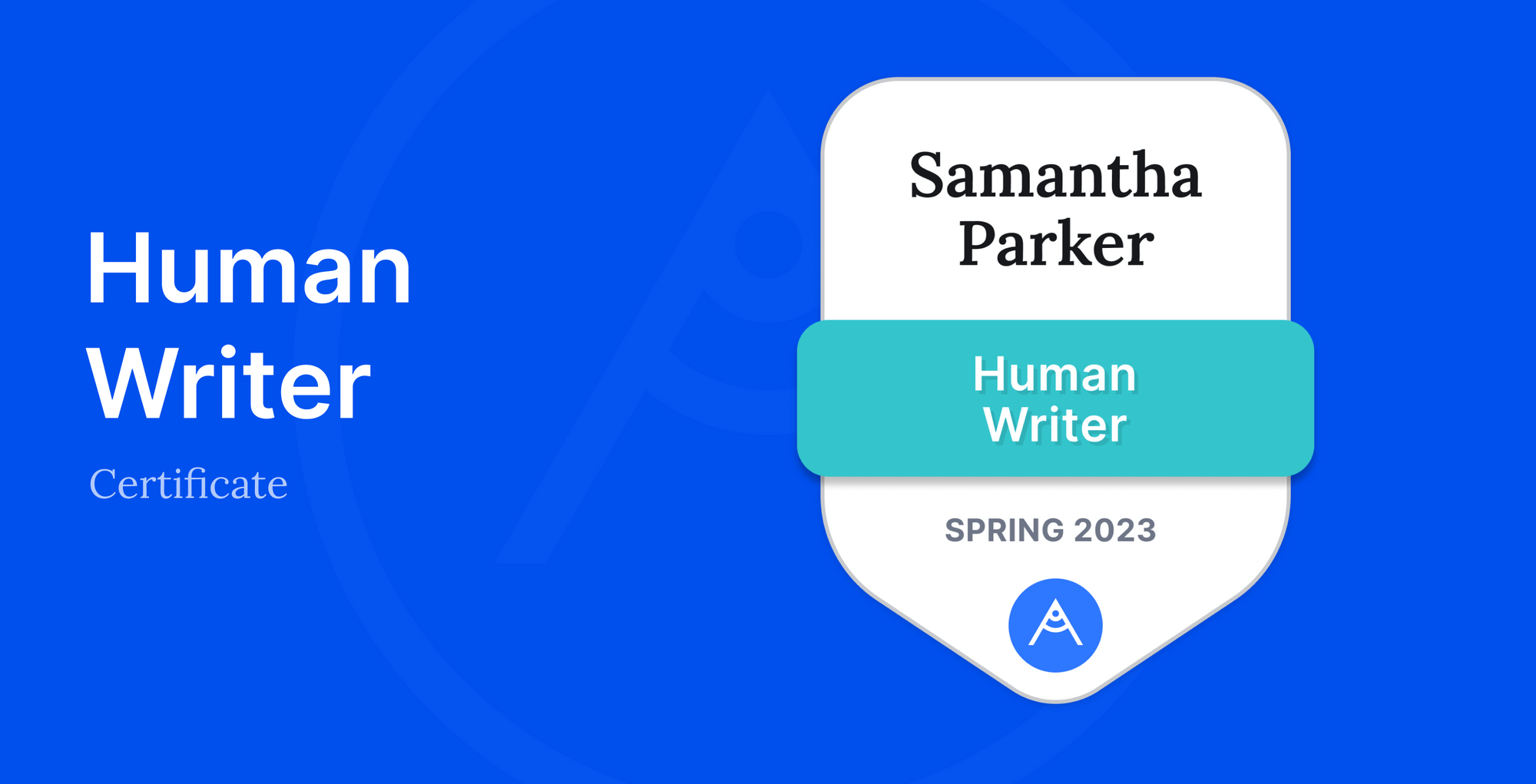

That's why, on a regular basis, Authory checks all the new articles a customer has written and compares them to their existing style fingerprint. If we find a match with a reasonable degree of certainty, we award the customer the Human Writer certificate.
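As a rough illustration of what such a periodic check could look like, the Python sketch below scores new pieces against a writer's past pieces. Everything in it is an assumption made for the example: the feature vectors are plain lists of numbers, the distance measure is a mean absolute z-score, and the `threshold` of 2.0 is a hypothetical cut-off, not the value or method Authory uses.

```python
from statistics import mean, stdev


def match_score(past: list[list[float]], new: list[float]) -> float:
    """Mean absolute z-score of a new piece's features against past pieces.

    Lower means the new piece sits closer to the writer's usual style.
    Requires at least two past pieces so a spread can be computed.
    """
    z_scores = []
    for i, value in enumerate(new):
        column = [row[i] for row in past]
        # Guard against a zero spread when every past value is identical
        spread = stdev(column) or 1e-9
        z_scores.append(abs(value - mean(column)) / spread)
    return mean(z_scores)


def matches_fingerprint(
    past: list[list[float]],
    new_pieces: list[list[float]],
    threshold: float = 2.0,  # hypothetical cut-off for "reasonable certainty"
) -> bool:
    """Check a whole batch of new pieces at once, not piece by piece."""
    return mean(match_score(past, piece) for piece in new_pieces) <= threshold


# Each row holds two toy features: [words per sentence, characters per word]
past_pieces = [[10.0, 4.0], [12.0, 5.0], [14.0, 6.0]]
print(matches_fingerprint(past_pieces, [[12.0, 5.0]]))
print(matches_fingerprint(past_pieces, [[20.0, 9.0]]))
```

Scoring the batch as a whole mirrors the point above: the goal is general proof across a writer's recent output, so a single unusual piece does not decide the outcome on its own.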

The result is exactly what writers need: independent proof of their authentic way of writing. The certificate provides credible evidence that the customer’s articles were, with a high degree of certainty, written by a human and not by artificial intelligence. It’s a seal of quality that they can proudly use when interacting with existing or prospective clients or employers.

Learn more about the Human Writer Certificate:

- Frequently Asked Questions

- Example Certificate

- What you can do with your certificate

- The Technology behind the Human Writer Certificate